Because they now only need an SDI EQ and an SDI cable driver, whereas the previous chip they used was an SDI receiver + reclocker to 10-bit parallel data that went straight into an HDMI cable driver.

That is how almost all SDI > HDMI converters work. It is a proven concept and gives the most optimized conversion in terms of latency and performance. Those converters were the only ones that stayed cool and did not overheat to the point where you could bake an egg on them.

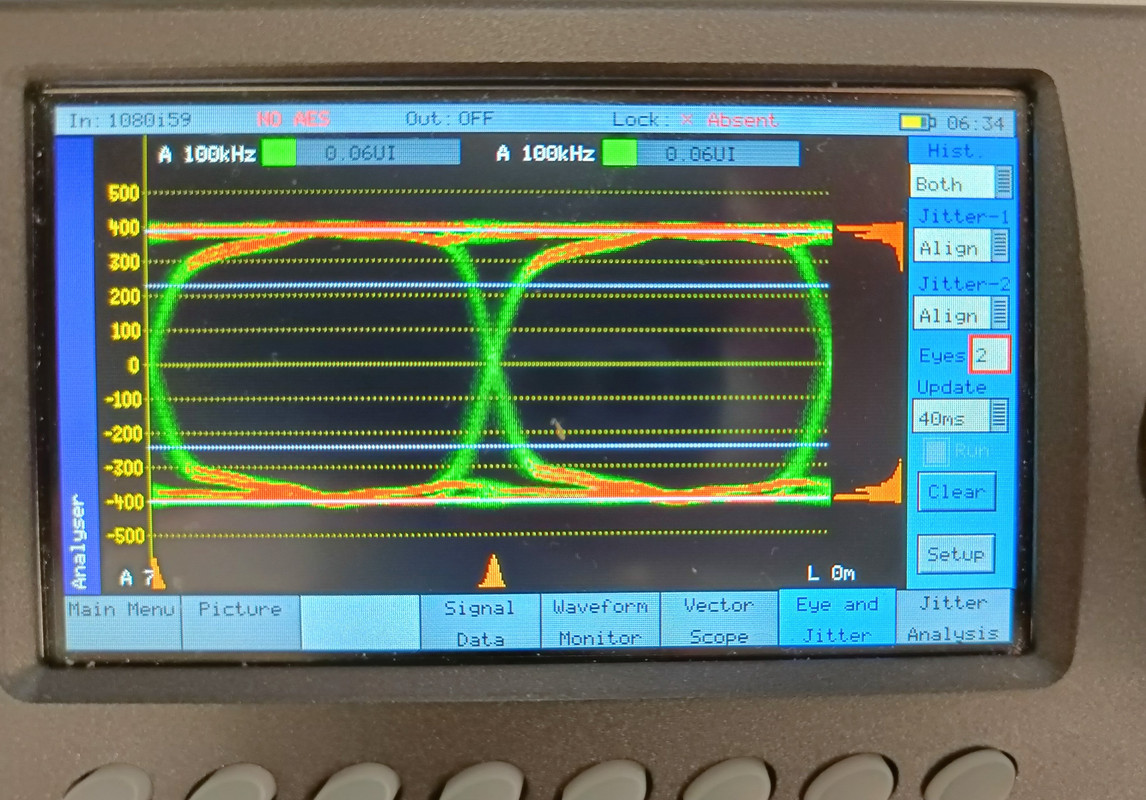

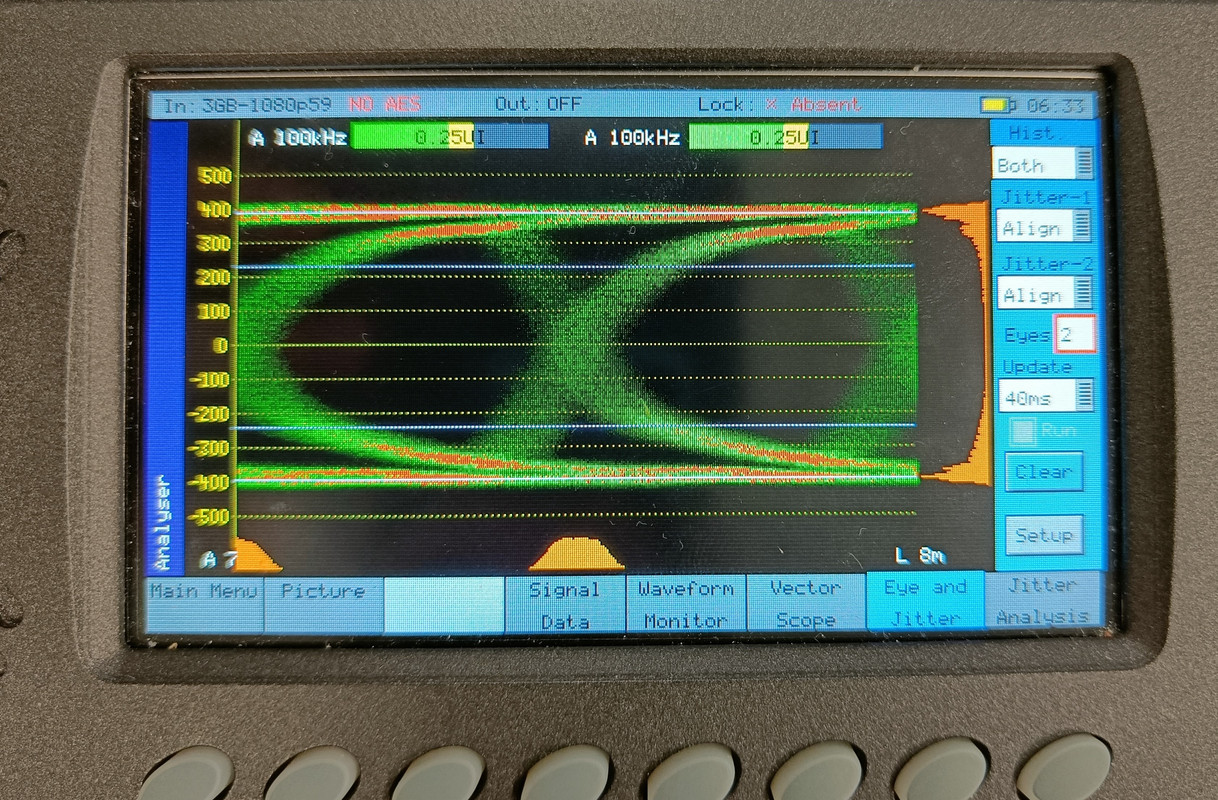

Yes, I get why they went for an FPGA, as it opens doors like LUT conversion etc. But the conversion is slower, and a lot of users report that these devices don’t negotiate with other hardware as well as the old ones did. And based on these eye diagrams, the output drivers don’t look very promising.

Anyway, I really admire what they have done, as they have been able to make a box with a pretty modern FPGA inside that does SDI manipulation for under 40 bucks, which is quite amazing. It will need a few FW updates before it runs smoothly for most users.

And based on my quick look at the datasheet of the output driver, I don’t have high hopes that they will be able to improve those output eyes very much. That output chip is very limited in what it can do in terms of optimization.

The more modern versions of these output drivers have all kinds of compensation settings that you can adjust.

Anyway, with the current chip market situation, it’s better to have something than to not be able to deliver your hardware product at all. We have already ceased producing 2 products due to this issue.